The AI Tool Nobody Talks About: When Manual Processes Actually Win

Sometimes the best AI tool is no AI tool at all. Learn to recognize situations where traditional approaches deliver better results than automation.

A boutique consulting firm spent three months implementing an AI-powered project management system, training their team, customizing workflows, and migrating historical data. After six months of use, they quietly returned to their previous manual process: a shared spreadsheet, weekly team meetings, and direct client communication. The AI system had automated their workflow, but it had also eliminated the informal conversations, relationship building, and contextual understanding that made their consulting valuable. Clients felt more distant, team members felt less connected to projects, and the firm's distinctive collaborative culture had eroded.

This isn't a story about a bad AI tool—the system worked exactly as designed. It's a story about recognizing that some processes shouldn't be automated, even when automation is technically possible. The consulting firm's project management process wasn't just about tracking tasks and deadlines—it was a vehicle for team collaboration, client relationship building, and knowledge sharing. Automating it optimized the wrong things.

When Relationships Matter More Than Efficiency

Client-facing processes that build relationships, establish trust, and demonstrate personal attention often suffer when automated. A wealth management firm implemented an AI tool to generate personalized investment recommendations, expecting to serve more clients with less effort. Instead, client satisfaction dropped and assets under management declined. Clients didn't want "personalized" AI-generated advice. They wanted their advisor's personal attention and judgment, and an ongoing relationship with that advisor.

By purely quantitative measures, the AI tool's investment recommendations were better. But wealth management isn't purely a quantitative discipline: it's a relationship business where clients value personal connection, emotional support during market volatility, and the confidence that comes from working with a trusted human advisor. The AI tool optimized for analytical accuracy while undermining the relationship dynamics that actually drove client value.

Evaluate whether your process serves primarily transactional or relational purposes before automating it. Transactional processes—data entry, routine calculations, standardized communications—often benefit from automation. Relational processes—client relationship building, team collaboration, mentorship, creative brainstorming—often suffer when automated because the human interaction is the value, not a means to an end.

This doesn't mean AI tools have no place in relationship-oriented work. They can handle supporting tasks—scheduling, data analysis, research—that free professionals to focus on relationship building. But automating the relationship-building activities themselves typically backfires. A financial advisor might use AI to analyze portfolio performance but should personally discuss recommendations with clients. A consultant might use AI to research industry trends but should personally facilitate strategy discussions with clients.

When Context and Judgment Trump Speed and Scale

A law firm implemented an AI contract review tool to accelerate due diligence for M&A transactions. The tool flagged standard risk clauses accurately and quickly, but it consistently missed the subtle contextual issues that experienced attorneys immediately recognized: unusual party relationships, inconsistent definitions across documents, and implications of specific jurisdiction choices. The AI tool made junior attorneys faster at finding standard issues, but it didn't make them better at exercising judgment about non-standard situations.

AI tools excel at pattern matching and standardized analysis. They struggle with context-dependent judgment, especially in situations involving ambiguity, competing priorities, or novel circumstances. A hiring manager using an AI resume screening tool might efficiently filter candidates based on keywords and qualifications, but the tool can't assess cultural fit, growth potential, or the intangible qualities that distinguish good candidates from great ones.

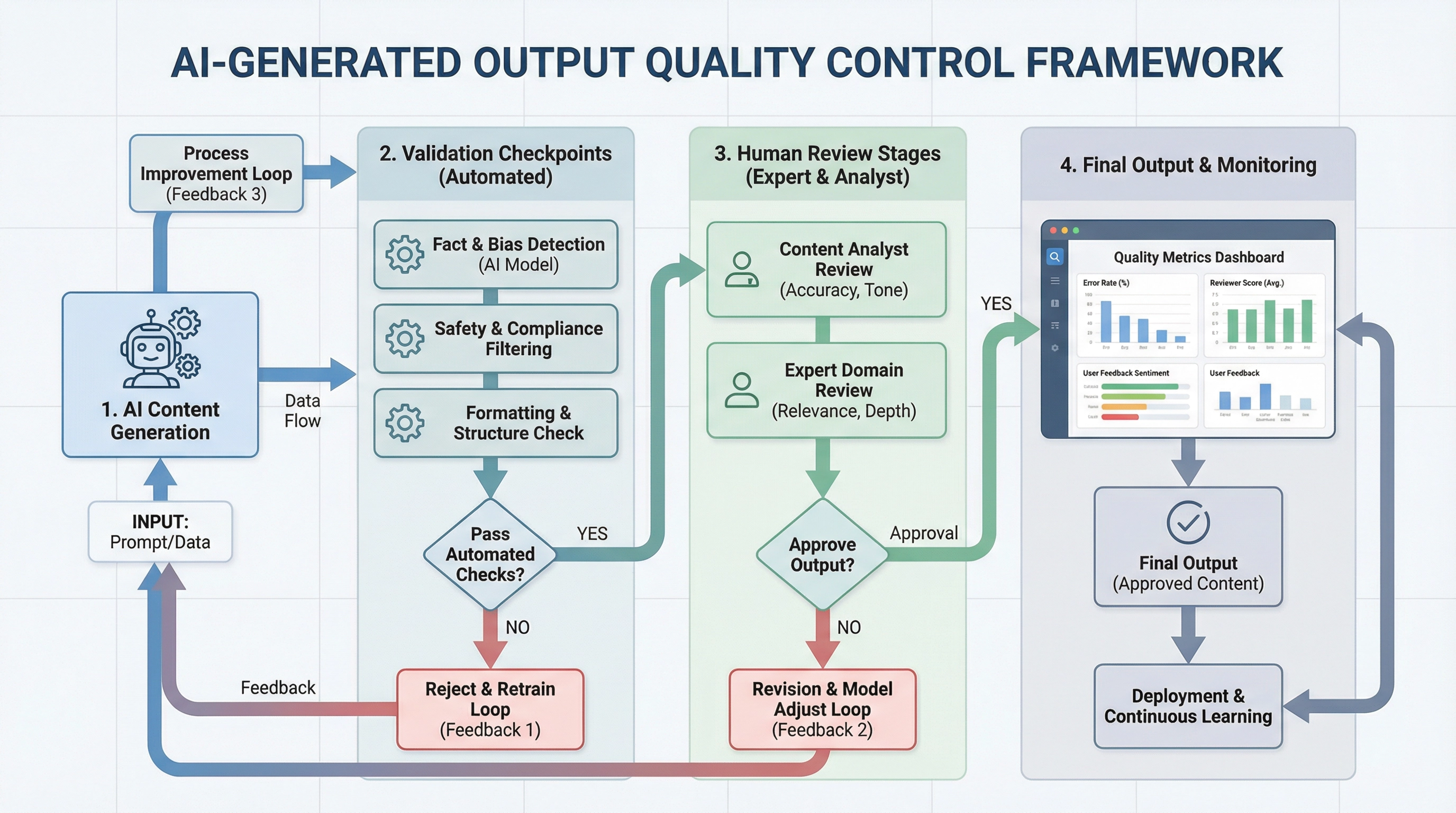

Recognize the limits of AI tools in judgment-intensive work. They can handle the mechanical aspects—data gathering, pattern identification, initial screening—but they can't replace human judgment about what the patterns mean, which factors matter most in a specific context, or how to balance competing considerations. Use AI tools to inform judgment, not replace it.

For complex decision-making processes, consider a hybrid approach where AI tools handle structured analysis while humans focus on contextual interpretation and final judgment. A medical team might use AI to identify potential diagnoses based on symptoms and test results, but physicians make the final diagnostic decision considering patient history, risk factors, and clinical judgment. This division of labor leverages AI's analytical capabilities while preserving human expertise where it matters most.

When Learning and Development Are the Goal

A software development bootcamp experimented with AI coding assistants to help students complete projects faster. Student project completion rates increased, but job placement rates declined. Employers reported that graduates couldn't solve problems independently, debug code effectively, or understand fundamental programming concepts. The AI tools had helped students produce code without developing the underlying skills that employers valued.

Using AI tools to accelerate learning often undermines the learning itself. The struggle, experimentation, and problem-solving that feel inefficient are actually the mechanisms through which people develop expertise. An AI writing tool that helps a junior marketer produce polished content quickly can keep them from developing writing skills through practice and feedback. An AI data analysis tool that automatically generates insights can keep an analyst from developing the pattern recognition and critical thinking skills that distinguish expert analysts from novices.

When the goal is skill development rather than output production, manual processes typically deliver better results than AI-assisted workflows. A graphic designer learning composition principles benefits more from manually arranging elements and receiving critique than from using an AI tool that automatically generates layouts. A financial analyst learning valuation techniques benefits more from building models from scratch than from using an AI tool that automates the calculations.

This doesn't mean AI tools have no place in learning environments. They can provide examples, suggest approaches, and offer feedback. But they shouldn't replace the practice and struggle that build expertise. A music student might use AI to analyze professional performances but should practice their instrument without AI assistance. A writer might use AI to research topics but should write drafts manually to develop their voice and style.

When Creativity and Originality Are Essential

A design agency adopted AI image generation tools to accelerate concept development for client pitches. Their pitch win rate dropped by 40%. Clients reported that the agency's concepts felt generic, derivative, and similar to what they'd seen from other agencies. The AI tools had made the agency faster at producing concepts, but those concepts lacked the originality and creative insight that had differentiated the agency's work.

AI tools trained on existing work inherently produce outputs that reflect patterns in their training data. They excel at recombining existing ideas in new ways, but they struggle with genuine originality—the kind of creative leap that comes from deep domain expertise, cross-disciplinary thinking, or challenging fundamental assumptions. A marketing campaign that needs to break through noise and capture attention requires creative originality that AI tools trained on existing campaigns can't provide.

Recognize when your work requires genuine creativity versus when it requires competent execution of established patterns. AI tools work well for the latter—producing variations on proven concepts, adapting successful approaches to new contexts, or executing technical aspects of creative work. They struggle with the former—developing breakthrough ideas, challenging category conventions, or creating work that establishes new patterns rather than following existing ones.

For creative work, consider using AI tools for research, exploration, and technical execution while reserving the core creative decisions for human judgment. A product designer might use AI to generate variations on a design direction but should personally develop the initial direction based on user research and creative insight. A copywriter might use AI to test different phrasings but should personally develop the core message and positioning strategy.

When Transparency and Explainability Are Required

A healthcare system implemented an AI tool to prioritize patient cases for specialist review. The tool improved efficiency by 30%, but it created a serious problem: when physicians questioned why certain cases were prioritized over others, the AI tool couldn't explain its reasoning in clinically meaningful terms. The system worked, but its opacity undermined physician trust and created potential liability if prioritization decisions were ever challenged.

Many AI tools operate as black boxes—they produce outputs without providing transparent reasoning that humans can verify, challenge, or learn from. This opacity creates problems in contexts where decisions need to be explained, justified, or audited. A loan officer using an AI credit scoring tool might need to explain to an applicant why their application was denied. A hiring manager using an AI candidate screening tool might need to defend their selection process against discrimination claims. An investment advisor using an AI portfolio optimization tool might need to justify recommendations to regulators.

When transparency and explainability are essential, manual processes or transparent algorithmic approaches often work better than opaque AI tools. A credit decision based on clear, documented criteria can be explained and defended. An AI credit decision based on complex neural network patterns might be more accurate, but it is much harder to explain in terms that satisfy regulatory requirements or customer expectations.
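To make "clear, documented criteria" concrete, here is a minimal sketch of a rule-based credit decision that returns its reasons alongside the outcome. The `Applicant` fields, the `CRITERIA` list, and every threshold are hypothetical values chosen for illustration; they are not real lending rules and do not come from any firm described in this article.

```python
from dataclasses import dataclass


@dataclass
class Applicant:
    credit_score: int
    debt_to_income: float  # monthly debt payments divided by monthly income
    months_employed: int


# Hypothetical, illustrative criteria. Each entry pairs a plain-language
# label with the check it documents, so a denial can be explained directly.
CRITERIA = [
    ("credit score of at least 650", lambda a: a.credit_score >= 650),
    ("debt-to-income ratio of at most 0.40", lambda a: a.debt_to_income <= 0.40),
    ("at least 12 months in current job", lambda a: a.months_employed >= 12),
]


def decide(applicant: Applicant) -> tuple[bool, list[str]]:
    """Return (approved, unmet_criteria) for one applicant."""
    unmet = [label for label, passes in CRITERIA if not passes(applicant)]
    return (not unmet, unmet)


approved, unmet = decide(Applicant(credit_score=600, debt_to_income=0.50, months_employed=24))
print("approved" if approved else "denied; unmet criteria: " + ", ".join(unmet))
```

Because every rule carries its own label, a loan officer can read the unmet criteria straight back to the applicant. That is the property an opaque model makes hard to provide.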

If you must use AI tools in contexts requiring explainability, prioritize tools that provide interpretable outputs: showing which factors influenced decisions, highlighting confidence levels, and enabling human review of reasoning. Some AI tools are designed specifically for regulated industries where explainability is required. These tools may give up some accuracy in exchange for interpretability, but they provide the transparency that high-stakes decisions demand.

Making the Right Choice: AI Tool or Manual Process?

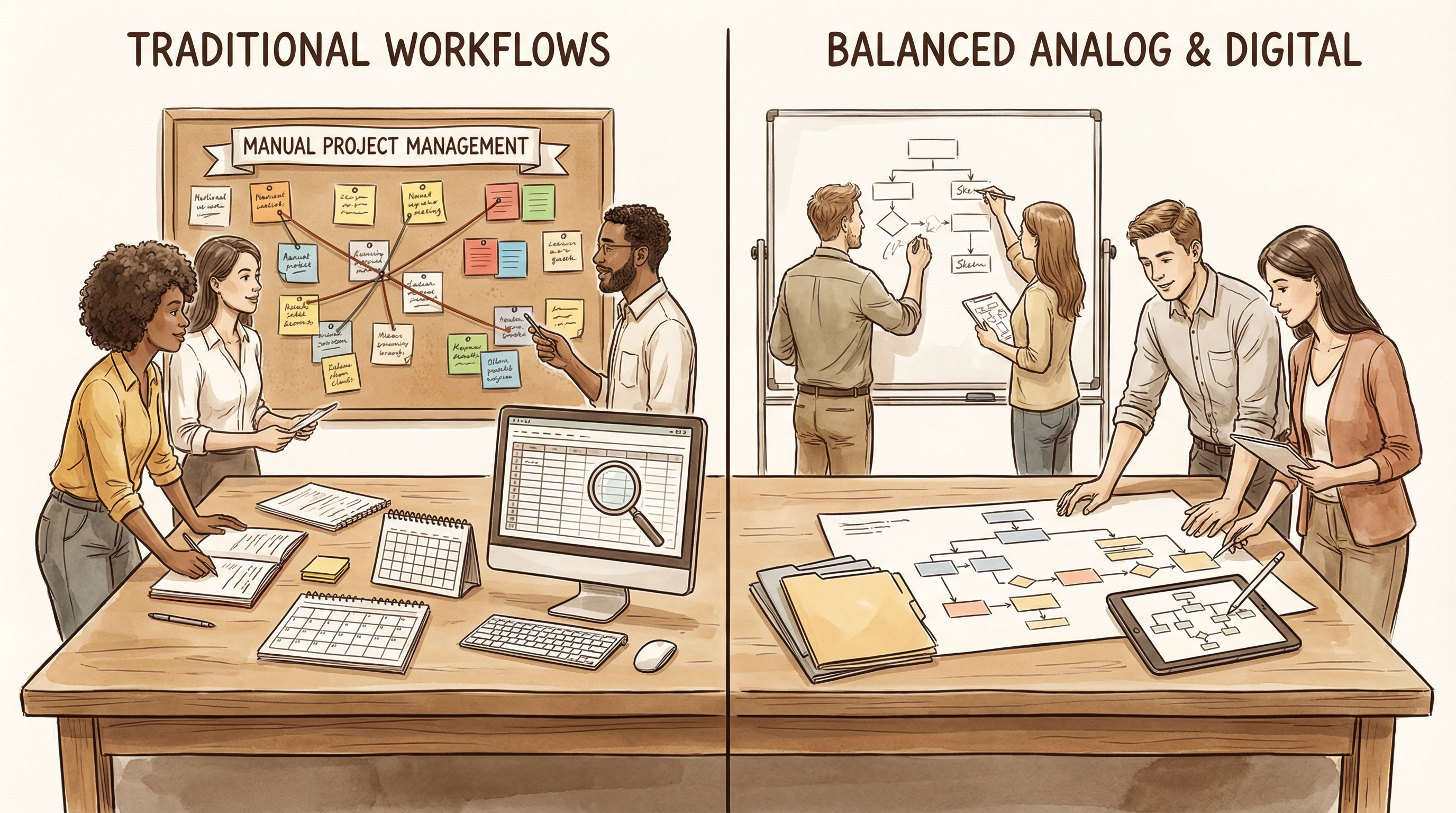

The decision to automate a process with AI tools should consider more than just efficiency gains. Ask whether the process serves primarily transactional or relational purposes. Evaluate whether context and judgment are more important than speed and scale. Consider whether the goal is output production or skill development. Assess whether creativity and originality are essential. Determine whether transparency and explainability are required.
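The five questions above can be written down as a simple checklist. The sketch below is one possible encoding, not a validated scoring method: the `ProcessProfile` fields, the example processes, and the rule that any single "yes" is enough to keep humans central are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class ProcessProfile:
    """Yes/no answers to the five questions for one process (hypothetical)."""
    name: str
    primarily_relational: bool
    judgment_over_speed: bool
    goal_is_skill_development: bool
    originality_essential: bool
    explainability_required: bool


def reasons_to_stay_manual(profile: ProcessProfile) -> list[str]:
    """Collect every factor that points toward preserving the manual process."""
    checks = [
        (profile.primarily_relational, "the human interaction is the value"),
        (profile.judgment_over_speed, "context and judgment matter more than speed and scale"),
        (profile.goal_is_skill_development, "the goal is skill development, not output"),
        (profile.originality_essential, "creativity and originality are essential"),
        (profile.explainability_required, "decisions must be explained or audited"),
    ]
    return [reason for applies, reason in checks if applies]


def recommend(profile: ProcessProfile) -> str:
    # Assumption: one flagged factor is enough to keep humans central.
    reasons = reasons_to_stay_manual(profile)
    if not reasons:
        return f"{profile.name}: reasonable candidate for automation"
    return f"{profile.name}: keep humans central ({'; '.join(reasons)})"


data_entry = ProcessProfile("invoice data entry", False, False, False, False, False)
client_reviews = ProcessProfile("client portfolio reviews", True, True, False, False, True)
print(recommend(data_entry))
print(recommend(client_reviews))
```

The recommendation matters less than the list of reasons, which gives a team something specific to discuss before deciding. A flagged process can still use AI for supporting tasks such as scheduling or research.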

When these factors point toward preserving manual processes, resist the pressure to automate just because automation is possible. Sometimes the best AI tool is no AI tool at all. The consulting firm that returned to manual project management, the law firm that limits AI contract review to supporting roles, and the design agency that uses AI for research but not core creative work all recognized that certain processes deliver more value when humans remain central to them.

This doesn't mean rejecting AI tools entirely—it means using them strategically where they add value while preserving manual processes where human involvement is the value. The most successful teams don't automate everything they can—they automate what they should while keeping humans in the loop where human judgment, creativity, relationships, and expertise matter most.