When AI Tools Actually Slow You Down: Three Warning Signs Most Teams Miss

Adopting AI tools without proper evaluation can create more problems than solutions. Learn to identify the critical warning signs before they impact your team's productivity.


Last quarter, a mid-sized marketing agency invested heavily in three different AI writing tools, expecting to double their content output. Instead, their delivery timeline stretched by 40%, client revisions tripled, and two senior writers threatened to quit. The problem wasn't the tools themselves—it was how the agency approached adoption.

This scenario plays out across industries every week. Organizations rush to implement AI solutions, driven by competitive pressure or executive mandates, without addressing fundamental questions about workflow integration, team readiness, or output quality standards. The result? Tools that promise efficiency end up creating bottlenecks, confusion, and frustration.

The Integration Trap: When New Tools Break Existing Workflows

Most AI tool failures stem from a mismatch between the tool's design assumptions and your team's actual working patterns. A sales team that relies on rapid-fire Slack communication will struggle with an AI assistant that requires structured email-style prompts. A design team accustomed to iterative visual feedback loops won't thrive with text-based AI tools that demand precise upfront specifications.

Before adopting any AI tool, map your team's current workflow in granular detail. Document how information moves between team members, where decisions get made, and which steps consume the most time. Then compare this reality against the tool's intended use case. If the tool requires your team to fundamentally change how they work—rather than enhancing existing patterns—you're setting up for resistance and reduced productivity.

Consider a content team that produces blog posts through a collaborative process: writers draft, editors refine structure, SEO specialists optimize keywords, and designers create visuals. An AI writing tool that generates complete articles in isolation disrupts this collaborative flow and can eliminate the value each specialist brings. A better fit might be a tool that assists each role within its existing responsibilities: helping writers overcome blank page syndrome, suggesting structural improvements to editors, or identifying keyword opportunities for SEO specialists.

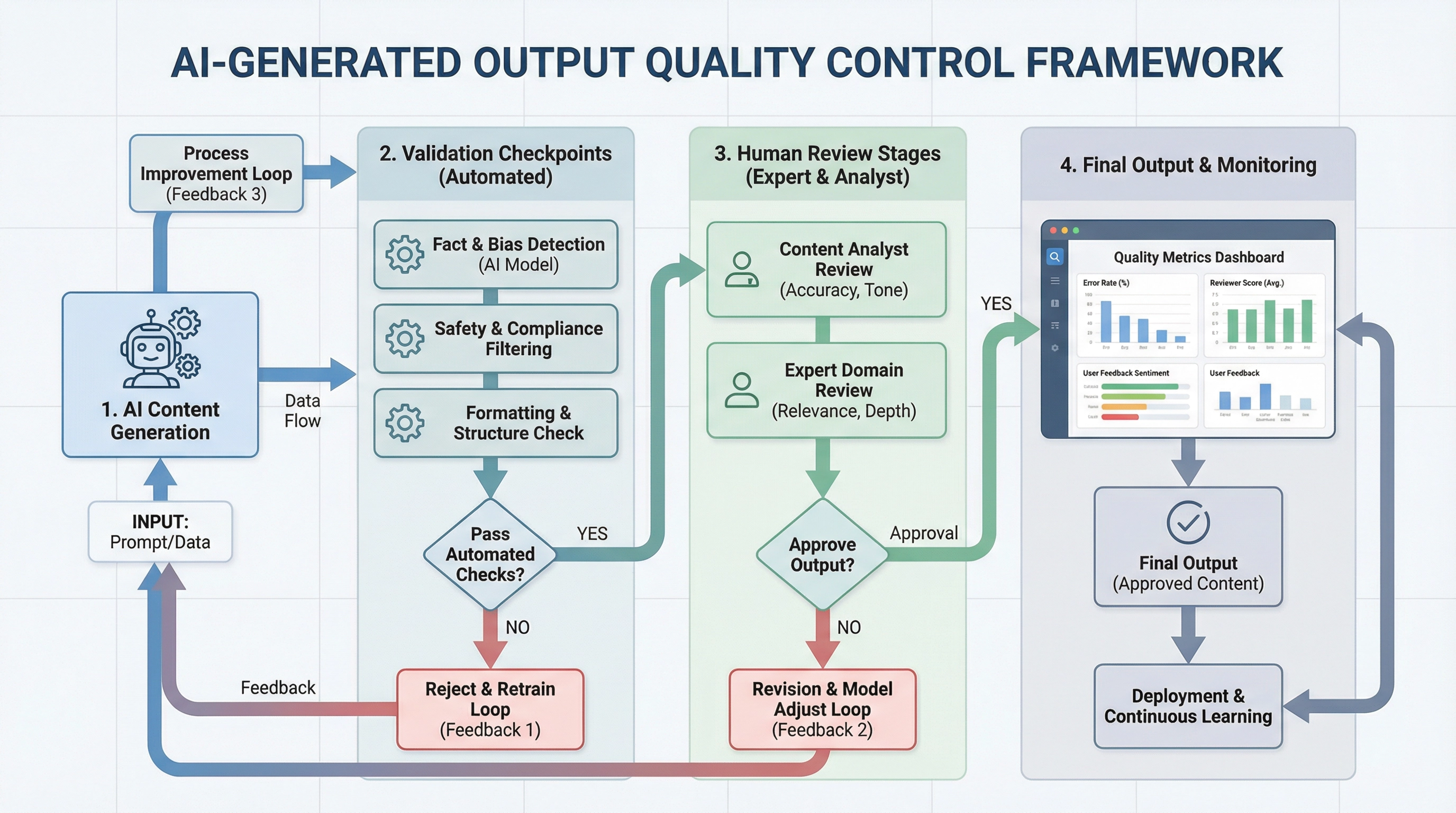

The Quality Control Crisis: When AI Output Requires More Review Than Manual Work

A financial services firm implemented an AI tool to generate client reports, expecting to save their analysts 10 hours per week. Instead, analysts spent 15 hours weekly reviewing and correcting AI-generated content for accuracy, compliance, and tone. The tool technically worked—it produced reports—but it created more work than it eliminated.

This quality control trap emerges when organizations underestimate the expertise required to verify AI output. In fields with high accuracy requirements—legal, medical, financial, technical—the cognitive load of reviewing AI-generated content often exceeds the effort of creating it manually. Experts must not only check facts but also identify subtle errors, missing context, and inappropriate framing that could have serious consequences.

Evaluate potential AI tools by testing them on real work samples and measuring the time required for thorough review. As a rule of thumb, if your subject matter experts spend more than 60% of the original task time reviewing AI output, the tool probably isn't delivering meaningful efficiency gains. The threshold leaves a margin for the mental effort of verification, which for complex tasks can be more demanding than creating the content from scratch.
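The 60% check can be turned into a small calculation once you have timing samples. The sketch below is illustrative only: the function names and the sample timings are hypothetical, and it assumes you have recorded, in minutes, how long each task takes manually and how long reviewing the AI version of the same kind of task takes.

```python
from statistics import mean

# The article's rule-of-thumb threshold: review time above 60% of the
# original task time suggests the tool is not saving meaningful effort.
REVIEW_RATIO_THRESHOLD = 0.60


def review_ratio(manual_minutes, review_minutes):
    """Return average review time as a fraction of average manual time."""
    if not manual_minutes or not review_minutes:
        raise ValueError("need at least one timing sample of each kind")
    return mean(review_minutes) / mean(manual_minutes)


def passes_review_check(manual_minutes, review_minutes,
                        threshold=REVIEW_RATIO_THRESHOLD):
    """True when review effort stays at or below the threshold."""
    return review_ratio(manual_minutes, review_minutes) <= threshold


if __name__ == "__main__":
    # Hypothetical samples: minutes to write a report by hand versus
    # minutes to review and correct an AI-generated one.
    manual = [120, 90, 150]
    review = [100, 70, 110]
    print(f"review ratio: {review_ratio(manual, review):.0%}")
    print("passes check:", passes_review_check(manual, review))
```

Averages hide variation, so it is worth looking at the worst individual samples as well as the overall ratio.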

Quality control becomes even more critical when AI tools are used for client-facing or compliance-sensitive work. A single error in an AI-generated legal brief, medical summary, or financial analysis can damage reputation and create liability. Teams must establish clear review protocols, assign responsibility for verification, and maintain documentation of the review process. If these quality control measures consume more resources than the AI tool saves, you're better off sticking with manual processes or finding tools better suited to your quality requirements.

The Hidden Costs: Training, Maintenance, and Team Resistance

The sticker price of an AI tool rarely reflects its true cost. A customer service team adopted an AI chatbot platform for $500 monthly, only to discover they needed $3,000 in monthly consulting fees to maintain conversation flows, plus 20 hours of internal staff time for weekly updates. The total cost of ownership exceeded the cost of their previous solution by 300%.

Calculate the full cost of AI tool adoption by including training time, ongoing maintenance, integration with existing systems, and the productivity dip during the learning curve. Teams commonly see a 20-30% productivity decrease in the first month of AI tool adoption as they learn new interfaces, adjust workflows, and troubleshoot issues. Factor this temporary slowdown into your timeline and resource planning.
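A rough way to put numbers on this is to add the recurring costs and the one-time ramp-up loss together. The sketch below is a minimal illustration, not a complete cost model. The $500 license and $3,000 consulting figures come from the chatbot example above; the hourly rate, the monthly payroll, the choice of 25% as a midpoint of the 20-30% dip, and the reading of the 20 staff hours as a monthly figure are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class ToolCosts:
    license_monthly: float      # subscription price
    consulting_monthly: float   # external maintenance fees
    staff_hours_monthly: float  # internal upkeep time (assumed monthly)
    staff_hourly_rate: float    # assumed loaded cost per staff hour

    def monthly_total(self) -> float:
        """Recurring monthly cost, including internal staff time."""
        staff_cost = self.staff_hours_monthly * self.staff_hourly_rate
        return self.license_monthly + self.consulting_monthly + staff_cost


def first_year_cost(costs: ToolCosts, team_monthly_payroll: float,
                    dip_fraction: float = 0.25,
                    one_time_training: float = 0.0) -> float:
    """Twelve months of recurring cost plus the first-month productivity dip.

    The dip is valued as a fraction of one month of team payroll.
    """
    ramp_up_loss = team_monthly_payroll * dip_fraction
    return 12 * costs.monthly_total() + ramp_up_loss + one_time_training


if __name__ == "__main__":
    chatbot = ToolCosts(license_monthly=500, consulting_monthly=3000,
                        staff_hours_monthly=20, staff_hourly_rate=50)
    print("monthly total:", chatbot.monthly_total())
    print("first year:", first_year_cost(chatbot, team_monthly_payroll=40000))
```

Comparing the monthly total against the sticker price alone shows how far the two can diverge before any ramp-up loss is counted.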

Team resistance represents another hidden cost that organizations consistently underestimate. When employees perceive AI tools as threats to their job security or professional identity, they'll find ways to work around them, ignore them, or sabotage implementation. A graphic design team forced to use an AI image generator might produce technically compliant work that lacks the creative excellence clients expect, effectively undermining the tool's value.

Address resistance through transparent communication about how AI tools will augment rather than replace human expertise. Involve team members in tool selection and implementation planning. Identify early adopters who can demonstrate value and mentor colleagues. Create feedback channels where team members can voice concerns and suggest improvements. Most importantly, tie AI tool adoption to clear benefits for individual team members—reduced tedious work, more time for creative tasks, or enhanced skill development—rather than just organizational efficiency metrics.

The Path Forward: Strategic AI Tool Evaluation

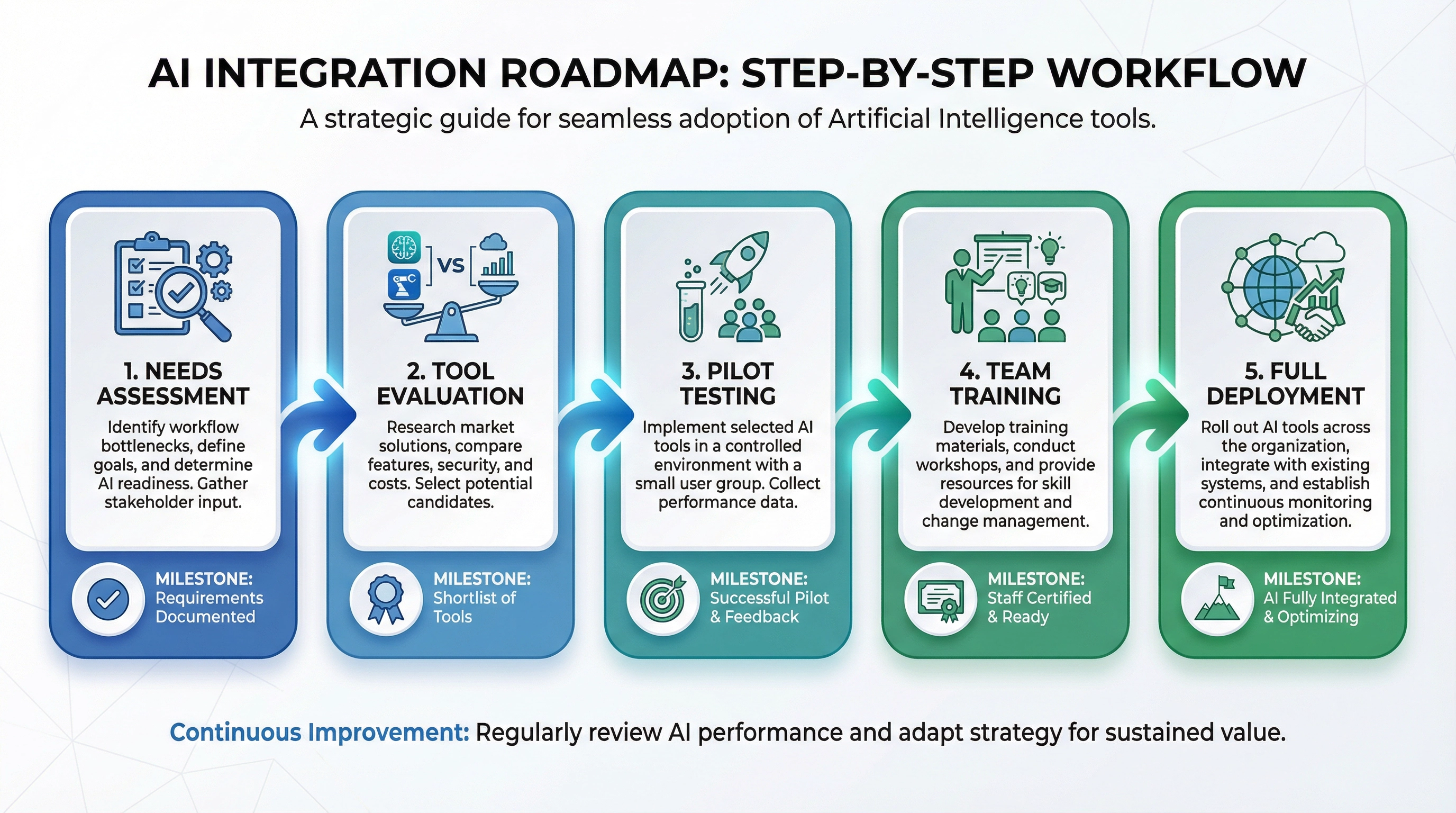

Successful AI tool adoption requires a structured evaluation process that goes beyond feature checklists and demo presentations. Start with a pilot program involving a small team working on non-critical projects. Measure specific metrics: time spent on tasks before and after AI tool adoption, error rates, revision cycles, and team satisfaction scores.

Set clear success criteria before the pilot begins. Define what "faster" or "better" means in quantifiable terms. A content team might measure success as "30% reduction in time from draft to publication while maintaining current quality standards and team satisfaction above 7/10." Without specific metrics, you'll struggle to determine whether the tool delivers value or just creates the illusion of progress.
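Writing the criteria down as an explicit check before the pilot starts makes it harder to move the goalposts afterward. The sketch below encodes the content-team example; the function name, the use of error rate as a stand-in for "current quality standards", and the sample figures are all hypothetical choices for illustration.

```python
def evaluate_pilot(baseline_hours, pilot_hours,
                   baseline_error_rate, pilot_error_rate,
                   satisfaction_score,
                   min_time_reduction=0.30, min_satisfaction=7.0):
    """Check pilot results against criteria agreed before the pilot.

    Returns a dict mapping each criterion to True or False so that a
    failed pilot shows which criterion was missed.
    """
    if baseline_hours <= 0:
        raise ValueError("baseline_hours must be positive")
    time_reduction = (baseline_hours - pilot_hours) / baseline_hours
    return {
        # At least a 30% reduction in time from draft to publication.
        "time_reduction": time_reduction >= min_time_reduction,
        # Quality maintained: error rate no worse than before (assumed proxy).
        "quality_maintained": pilot_error_rate <= baseline_error_rate,
        # Team satisfaction above 7 out of 10.
        "team_satisfaction": satisfaction_score > min_satisfaction,
    }


if __name__ == "__main__":
    # Hypothetical pilot: 10 hours per post before, 6.5 hours with the tool.
    results = evaluate_pilot(baseline_hours=10, pilot_hours=6.5,
                             baseline_error_rate=0.04, pilot_error_rate=0.03,
                             satisfaction_score=7.8)
    for criterion, passed in results.items():
        print(f"{criterion}: {'pass' if passed else 'fail'}")
    print("pilot succeeded:", all(results.values()))
```

Returning one result per criterion, rather than a single yes or no, makes it easier to see whether a tool failed on speed, quality, or team acceptance.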

Pay attention to edge cases and failure modes during your pilot. AI tools often perform well on straightforward tasks but struggle with exceptions, nuance, and context-dependent decisions. A customer service AI might handle routine inquiries effectively but escalate complex issues inappropriately, creating more work for human agents. Test your AI tool against your most challenging scenarios, not just typical use cases.

Finally, maintain flexibility in your AI tool strategy. The right tool for your team today might not serve you well as your needs evolve. Build evaluation and reassessment into your ongoing workflow. Check in quarterly to assess whether the tool still delivers value, whether better alternatives have emerged, and whether your team's needs have changed. The AI tool landscape evolves rapidly—your evaluation process should keep pace.

Making the Right Call

AI tools offer genuine value when they align with your team's workflow, quality standards, and capabilities. But rushing adoption without proper evaluation creates more problems than it solves. Watch for the warning signs: workflow disruption, quality control burdens, and hidden costs. When you spot these red flags, pause and reassess rather than pushing forward with a tool that isn't serving your needs.

The most successful AI tool implementations share common characteristics: they enhance existing workflows rather than replacing them, they reduce rather than increase quality control burden, and they deliver measurable value that exceeds their total cost of ownership. Use these criteria to guide your evaluation, and you'll avoid the productivity traps that derail so many AI adoption efforts.